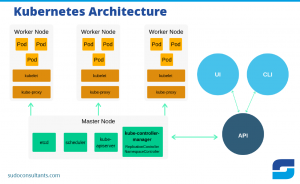

Kubernetes is an open-source system for automating the deployment, scaling, and management of containerized applications. It was originally designed by Google, and is now maintained by the Cloud Native Computing Foundation.

Kubernetes is often abbreviated to “k8s”, a numeronym in which the “8” stands for the eight letters between the “K” and the “s”. Kubernetes is one of the fastest-growing projects on GitHub, with over 30,000 contributors from over 10,000 organizations. It has been adopted by companies of all sizes, from startups to global enterprises, and some of the world’s largest companies, such as Google, Microsoft, Amazon, and IBM, use it to power their cloud-native applications.

1. Sidecars

Sidecars are a great way to extend the functionality of your application without having to modify its code. A sidecar is an extra container that runs in the same Pod as your main container, shares the Pod’s network, and can share its volumes. By adding one, you can add new features or supporting services without affecting your existing code.

For example, you could use a sidecar to add a log collector or an application monitor. A sidecar can also act as a proxy or adapter in front of the main container, which can be useful for testing purposes or for providing backwards compatibility for older clients.

Overall, sidecars are a flexible and powerful way to improve the functionality of your Kubernetes applications.
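As a rough illustration of the log collector case, the sketch below builds a Pod manifest in which the application container and a sidecar mount the same `emptyDir` volume. The image names, the Pod name, and the `pod_with_sidecar` helper are hypothetical; only the manifest fields follow the standard Pod schema.

```python
import json


def pod_with_sidecar(name, app_image, sidecar_image):
    """Build a Pod manifest whose two containers share one log volume."""
    # Both containers mount the same volume, so the sidecar can read
    # the files that the application writes.
    shared_logs = {"name": "logs", "mountPath": "/var/log/app"}
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "volumes": [{"name": "logs", "emptyDir": {}}],
            "containers": [
                {"name": "app", "image": app_image,
                 "volumeMounts": [shared_logs]},
                {"name": "log-collector", "image": sidecar_image,
                 "volumeMounts": [shared_logs]},
            ],
        },
    }


# Hypothetical image names, used only for illustration.
manifest = pod_with_sidecar(
    "web", "example.com/web:1.0", "example.com/log-collector:1.0"
)
print(json.dumps(manifest, indent=2))
```

Because `kubectl apply -f` accepts JSON as well as YAML, the printed output could be saved to a file and applied to a test cluster.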

2. Helm Charts

Helm is a package manager for Kubernetes that helps you install and manage applications on your Kubernetes cluster. The packages it installs are called charts.

Helm is similar to Linux package managers like apt and yum, but it is designed specifically for Kubernetes. Helm charts can be used to install applications from scratch or to upgrade existing applications.

With Helm, you can install multiple releases of an application side-by-side, and roll back to previous revisions if necessary. You can also use Helm to share your applications with others. To get started with Helm, you’ll need to download the Helm client and install it on your computer. Then, you can use the Helm client to search for and install Helm charts from public chart repositories, many of which are indexed on Artifact Hub. You can also create your own repository of Helm charts and share it with others.
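The workflow described above maps onto a handful of Helm 3 subcommands. The sketch below only assembles and prints the command lines; it does not run them. The repository name, URL, chart, and release names are placeholders, and `helm_workflow` is a hypothetical helper.

```python
import shlex


def helm_workflow(repo_name, repo_url, chart, release, namespace="default"):
    """Return the Helm 3 commands for a typical install/upgrade/rollback cycle."""
    chart_ref = f"{repo_name}/{chart}"
    return [
        ["helm", "repo", "add", repo_name, repo_url],   # register a chart repository
        ["helm", "repo", "update"],                     # refresh the local index
        ["helm", "search", "repo", chart],              # find charts by keyword
        ["helm", "install", release, chart_ref, "--namespace", namespace],
        ["helm", "upgrade", release, chart_ref, "--namespace", namespace],
        ["helm", "rollback", release, "1", "--namespace", namespace],  # back to revision 1
    ]


# Placeholder values, chosen only for illustration.
commands = helm_workflow("myrepo", "https://charts.example.com", "webapp", "shop-web")
for argv in commands:
    print(shlex.join(argv))
```

Installing the same chart under two different release names is what makes side-by-side installs possible.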

3. Service Discovery and Load Balancing

Service discovery is an essential part of any distributed system, and Kubernetes is no exception. In a nutshell, service discovery allows the workloads in a cluster to find and communicate with each other without hard-coding IP addresses. This matters in Kubernetes because Pods are short-lived and their IP addresses change as they are replaced or rescheduled.

Kubernetes uses two main mechanisms for service discovery: DNS and environment variables.

DNS is the recommended method for service discovery in Kubernetes, as it provides a robust and accurate way to resolve service names to IP addresses.

However, environment variables can also be used in cases where DNS is not available or impractical.
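Both mechanisms follow predictable naming rules, which the sketch below reproduces. It assumes the default cluster domain `cluster.local`; the service and namespace names are made up, and the two helper functions are hypothetical.

```python
def service_dns_name(service, namespace="default", cluster_domain="cluster.local"):
    """Fully qualified DNS name that resolves to a Service."""
    return f"{service}.{namespace}.svc.{cluster_domain}"


def service_env_vars(service):
    """Names of the host/port environment variables injected for a Service."""
    # The service name is upper-cased and dashes become underscores.
    prefix = service.upper().replace("-", "_")
    return (f"{prefix}_SERVICE_HOST", f"{prefix}_SERVICE_PORT")


print(service_dns_name("orders", namespace="shop"))
print(service_env_vars("redis-primary"))
```

One practical difference is that the environment variables are only set for Services that already exist when a Pod starts, whereas DNS names resolve for Services created later as well.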

In addition to service discovery, load balancing is also an important consideration in any distributed system. By distributing traffic across all the instances of an application, load balancing helps to ensure that no single instance is overwhelmed by requests. Kubernetes has load balancing built in: a Service spreads incoming traffic across the healthy Pods that back it.
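To make the idea concrete, here is a deliberately simplified model of a Service spreading requests over its ready endpoints. It uses plain round-robin, which is an assumption made for clarity; the actual selection strategy in a cluster depends on how the proxy layer is configured. The endpoint data and the `distribute` helper are hypothetical.

```python
from collections import Counter
from itertools import cycle


def distribute(request_count, endpoints):
    """Spread requests round-robin over the endpoints that are ready."""
    ready = [e["ip"] for e in endpoints if e["ready"]]
    if not ready:
        raise RuntimeError("no ready endpoints behind this Service")
    picker = cycle(ready)
    return Counter(next(picker) for _ in range(request_count))


# Made-up Pod IPs; the third Pod is failing its readiness check.
endpoints = [
    {"ip": "10.0.0.11", "ready": True},
    {"ip": "10.0.0.12", "ready": True},
    {"ip": "10.0.0.13", "ready": False},
]
print(distribute(10, endpoints))
```

The Pod that is not ready receives no traffic, which is the behaviour that keeps requests away from unhealthy instances.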

4. Cluster Federation

In computing, a federation is a grouping of distributed systems that fail independently and tolerate partial failure of the overall system. A key advantage of federation is that it allows for a scalable and decentralized management of a large number of components. One area where federation has been gaining popularity is in the management of Kubernetes clusters.

By federating multiple Kubernetes clusters, organizations can more easily manage a large number of nodes and avoid the single point of failure that can occur with a single cluster. Federation is not built into core Kubernetes; it is provided by separate add-on projects.

Additionally, federation enables different teams to manage their own clusters while still being able to share resources and information between clusters. As Kubernetes continues to grow in popularity, it is likely that cluster federation will become an increasingly important part of managing large-scale deployments.

5. Self-Healing

Kubernetes is a powerful tool for managing containerized applications at scale. However, even the most well-designed system can fail occasionally. That’s why it’s important to have a self-healing system in place that can detect and correct errors automatically. Kubernetes provides several features that make self-healing possible.

For example, when a pod managed by a controller such as a Deployment fails, Kubernetes will automatically create a new one to take its place. This keeps the application at the desired number of pods.

In addition, Kubernetes continuously monitors the health of all pods and restarts any container that fails its liveness check. These features make it possible for Kubernetes to recover from errors quickly and efficiently, keeping your applications running smoothly.
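The replacement behaviour comes from a control loop that keeps comparing the observed state with the desired state. The sketch below is a toy model of one pass of such a loop, not the real controller code; the Pod names and the `reconcile` helper are hypothetical.

```python
import itertools

_replacement_ids = itertools.count(1)


def reconcile(pods, desired):
    """One pass of a toy control loop: drop failed Pods, create replacements."""
    healthy = [p for p in pods if p["healthy"]]
    while len(healthy) < desired:
        # Stand-in for asking the cluster to start a fresh Pod.
        name = f"web-replacement-{next(_replacement_ids)}"
        healthy.append({"name": name, "healthy": True})
    # If there are more healthy Pods than desired, keep only `desired` of them.
    return healthy[:desired]


observed = [
    {"name": "web-a", "healthy": True},
    {"name": "web-b", "healthy": False},  # this Pod has failed
    {"name": "web-c", "healthy": True},
]
current = reconcile(observed, desired=3)
print([p["name"] for p in current])
```

Running the same function again on its own output changes nothing, which is the point of a reconciliation loop: it only acts when the observed state drifts from the desired state.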

6. Automated Rollouts and Rollbacks

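Kubernetes automates the process of rolling out new code changes to a production environment. By default, a Deployment performs a rolling update: it gradually replaces old pods with new ones, and the rollout stops progressing if the new pods fail to become ready. Kubernetes does not roll the change back on its own by default, but it keeps a revision history so that you can return to a previous version.

Rollouts and rollbacks can be triggered manually or automated through a delivery pipeline. A common use case for a manual, staged rollout is a gray release (also called a canary release), where a new version is exposed to a fraction of users first, for example for A/B testing. With Kubernetes, there is no need for complex scripting or dedicated hardware infrastructure; you can update your application with zero downtime.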

If something goes wrong with the new code, you can quickly and easily roll back the changes. This process allows you to rapidly iterate on code changes without compromising the stability of your production system.
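A Deployment’s rolling update is bounded by two settings, `maxSurge` and `maxUnavailable`. The sketch below simulates how old and new Pod counts could evolve under those bounds. It is a simplified model that assumes every new Pod becomes ready immediately, and `rolling_update` is a hypothetical helper rather than anything Kubernetes exposes.

```python
def rolling_update(replicas, max_surge=1, max_unavailable=0):
    """Simulate a rolling update as a list of (old_pods, new_pods) states."""
    if max_surge == 0 and max_unavailable == 0:
        raise ValueError("max_surge and max_unavailable cannot both be 0")
    old, new = replicas, 0
    steps = [(old, new)]

    def record():
        if steps[-1] != (old, new):
            steps.append((old, new))

    while old > 0 or new < replicas:
        # Surge: start new Pods without exceeding replicas + max_surge in total.
        new += min(replicas - new, replicas + max_surge - (old + new))
        record()
        # Drain: stop old Pods without dropping below replicas - max_unavailable.
        old -= min(old, (old + new) - (replicas - max_unavailable))
        record()
    return steps


for old_pods, new_pods in rolling_update(3, max_surge=1, max_unavailable=0):
    print(f"old={old_pods} new={new_pods}")
```

With `max_unavailable=0`, the total never drops below three Pods, which is what makes a zero-downtime update possible. On a real cluster, a rollback to the previous revision is typically done with `kubectl rollout undo`.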

7. Horizontal Scaling

Horizontal scaling is one of the most important capabilities of a Kubernetes deployment. It means handling more load by adding more instances of your application, rather than making a single instance bigger.

Kubernetes makes this easy to do. You increase the number of replicas of your pods, and Kubernetes schedules them across the nodes in your cluster. If one of your instances goes down, Kubernetes brings up a new one to take its place. All of this is managed transparently by the Kubernetes control plane.
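The replica count can also be adjusted automatically. The Horizontal Pod Autoscaler is documented as computing its target from the ratio of the current metric value to the desired one; the sketch below reproduces that core formula and leaves out details such as the tolerance band and stabilization windows. The default minimum and maximum here are arbitrary illustrative values.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """ceil(current_replicas * current_metric / target_metric), then clamped."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# 4 replicas at 90% average CPU with a 60% target: scale out.
print(desired_replicas(4, current_metric=90, target_metric=60))
# 5 replicas at 30% average CPU with a 60% target: scale in.
print(desired_replicas(5, current_metric=30, target_metric=60))
```

Clamping to a minimum and maximum keeps a noisy metric from scaling the application to zero or to an unbounded number of Pods.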

8. Multi-tenancy

Multi-tenancy means that multiple users share the same infrastructure. In terms of Kubernetes, this means that different teams or departments can use the same cluster without interfering with each other. Each team gets its own isolated space within the cluster, typically a namespace, where it can deploy and manage its own applications without affecting anyone else.

There are a few benefits to this approach:

- First, it saves money because you don’t need separate clusters for each team.

- Second, it’s more efficient because you can share resources like CPU and memory between teams.

- Finally, it can stay secure, because each team’s workloads and data can be isolated from everyone else’s using namespaces, access controls, and resource quotas. If one team’s applications go down, it doesn’t impact the others.

Multi-tenancy is well supported by Kubernetes and is one of the reasons it’s become so popular in the enterprise.
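One common way to give each team its own space is a namespace paired with a resource quota. The sketch below generates both manifests for a team; the team name, the limits, and the `tenant_manifests` helper are hypothetical, while the manifest fields follow the standard Namespace and ResourceQuota schemas.

```python
import json


def tenant_manifests(team, cpu, memory, max_pods):
    """Build a Namespace and a ResourceQuota that caps what the team can use."""
    namespace = {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {"name": team},
    }
    quota = {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": f"{team}-quota", "namespace": team},
        "spec": {
            "hard": {
                "requests.cpu": cpu,        # total CPU the team may request
                "requests.memory": memory,  # total memory the team may request
                "pods": str(max_pods),      # maximum number of Pods
            }
        },
    }
    return [namespace, quota]


# Illustrative limits for a hypothetical "payments" team.
tenant = tenant_manifests("payments", cpu="8", memory="16Gi", max_pods=20)
print(json.dumps(tenant, indent=2))
```

A quota like this addresses resource sharing only; isolating access and network traffic between teams would need additional objects such as RBAC roles and network policies.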

Conclusion

Kubernetes is a powerful tool, and it’s only going to become more popular in the years to come. If you’re not already using it, now is the time to start learning about it and implementing it into your workflow.